How did the database project begin?

The history of the book was developed to take into account phenomena related to written works, as much in their cultural as in their economic or political dimensions; for this reason, it has always privileged quantitative approaches. The early work of Daniel Mornet in Les Origines intellectuelles de la Révolution française (1933), and of Lucien Febvre and Henri-Jean Martin in L’Apparition du livre (1999/1958), already conveyed the desire to comprehend vast corpora rather than be limited to a few canonical titles. From the 1990s onward, however, the use of databases transformed the discipline by facilitating quantitative work and multiplying its possibilities.

In Quebec, the members of the Groupe de recherche sur l’édition littéraire au Québec (GRÉLQ),1 founded in 1982, have used various databases from the beginning, most of which they designed themselves. Such is the case notably with the publishers’ catalogues, which served as a platform for the major Histoire de l’édition littéraire au Québec au xxe siècle project (History of literary publishing in twentieth-century Quebec). Because these catalogues were conceived by literary scholars at a time when the use of such tools was not yet commonplace, their history is, in a sense, also the history of the beginnings of digital humanities.

Led by Jacques Michon and Richard Giguère, the first works by the GRÉLQ members focused on literary publishing in Quebec during World War II, a particularly fertile era for publishing (Michon 2004). From 1940 onward, French and Belgian publishers, paralyzed by the Occupation, were in no position to supply foreign francophone markets. In Quebec, where more than three quarters of production was imported from Europe, the scarcity of books hit hard. However, exceptional measures2 stipulating the enforcement of laws governing copyright in times of war were put in place to allow Québécois publishing houses to offset these shortages. Because there were not enough existing companies to cope with the task, new publishing houses came into being. Their production took three forms: republishing3 existing titles; publishing texts produced by Québécois authors; and publishing the texts of writers in exile. In addition to bookshops and local institutions, Québécois publishers served American, African, and South American markets. At the end of the war, however, European companies reconnected with their foreign clientele. Deprived of their outlets, the majority of Québécois publishers were forced to abandon the profession.

It was in order to recall this significant episode in the history of the book that GRÉLQ researchers set to work. They rapidly encountered a stumbling block, though, because in Quebec the systematic inventory of national production did not begin until the end of the 1960s.4 Compelled to reconstruct the corpus of books published during the war, the researchers decided to build digital catalogues from various sources in order to facilitate the processing of this corpus.

How was the corpus defined?

At first centered on literary works produced during World War II, the work of the researchers was extended to cover the period from the beginning of the twentieth century through to the 1980s. The majority of the catalogues of Québécois publishers active during this period were reconstituted. Publications were located in private and institutional collections, especially those of university, municipal, and government libraries. Faced with an absence of publishing archives, researchers also used secondary sources such as journals, magazines, reviews, and advertising material (announcements of new publications, promotional catalogues, etc.). Finally, numerous interviews were conducted with publishers who were still alive at the time. The computerized indexes, interviews, bibliographies, and digitized references produced in order to record and analyse this mass of documents remain available to researchers today.

Is the design of the database supported by a specific theory and/or discipline?

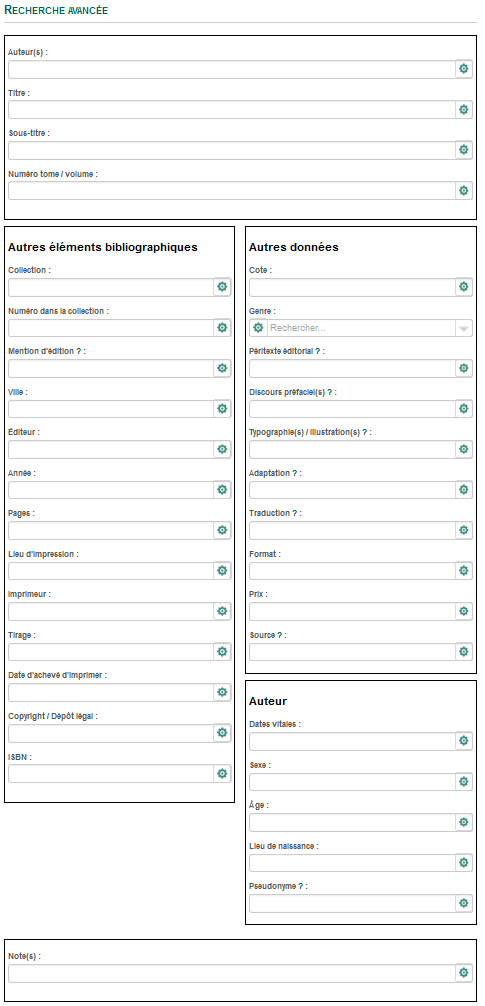

The catalogues of publishers were conceived according to a traditional bibliographical model adapted to the needs of the researchers. The image below provides a general idea of this model in its current form:

Besides the customary bibliographical fields (author, publisher, date of publication, etc.), rubrics were added containing information associated with the medium (binding, format), paratext (statement of responsibility, illustration, prefaces, etc.), manufacture (printer, print run, re-publication, re-print), circulation (price, point of sale), distribution (name of distributor), and reading (ex libris, imprimatur, etc.). Each description was entered using one or several copies of the book, since each copy can present its own features, some of them previously unpublished, as in the case of annotated copies. The catalogues thus fulfill certain requirements of analytical bibliography (Bowers 1950), even if they do not achieve the degree of precision required for the description of manuscripts and rare books.
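To make the shape of such a record concrete, here is a minimal sketch in Python of one catalogue entry with its groups of rubrics and its attached copies. The class names, field names, and sample values are assumptions chosen for illustration; they are not the field names of the actual GRÉLQ catalogues.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Copy:
    """One physical copy consulted for the description (hypothetical structure)."""
    location: str                      # library or private collection holding the copy
    ex_libris: Optional[str] = None    # ownership mark, if any
    annotations: Optional[str] = None  # handwritten notes specific to this copy


@dataclass
class CatalogueEntry:
    """One bibliographical description (field names are illustrative only)."""
    # Customary bibliographical fields
    author: str
    title: str
    publisher: str
    year: int
    # Medium
    binding: Optional[str] = None
    book_format: Optional[str] = None
    # Paratext
    prefaces: List[str] = field(default_factory=list)
    illustrated: bool = False
    # Manufacture
    printer: Optional[str] = None
    print_run: Optional[int] = None
    # Circulation and distribution
    price: Optional[str] = None
    distributor: Optional[str] = None
    # Reading
    imprimatur: Optional[str] = None
    # Copies examined for this description
    copies: List[Copy] = field(default_factory=list)


# Invented placeholder values, not a real catalogue entry.
entry = CatalogueEntry(
    author="Example Author",
    title="Example Title",
    publisher="Example Publisher",
    year=1943,
)
entry.copies.append(Copy(location="University library", annotations="marginal notes"))
```

Keeping copies in a separate list reflects the point made above: features such as annotations belong to an individual copy, not to the edition as a whole.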

The model also includes information concerning genre and details about authors. The genre indicators are particularly useful, making it possible to trace the evolution of a catalogue with regard to literary categories (novel, theatre, poetry, etc.) and publishing sectors (religion, children, literary, etc.). The areas of specialization and the strategies of each publishing house thus become apparent. For example, while the Éditions Variétés republished classics and books for children, the Éditions de l’Arbre privileged essays and novels by local and exiled authors. The biographical information, for its part, offers the portrait of a group that includes all authors, from the most renowned to the most obscure. Its quantitative processing can disclose the existence of networks according to age, sex, profession, or nationality. It also reveals, in the case of republished works, authors who left their mark on their era even if they have since been forgotten.
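The kind of tally described here can be sketched in a few lines of Python. The rows below are invented sample data with placeholder publisher names, and the helper functions `genre_profile` and `dominant_genre` are hypothetical; the sketch only illustrates how genre indicators can expose a publisher's specialization.

```python
from collections import Counter, defaultdict

# Invented sample rows: (publisher, year, genre). Not real catalogue data.
rows = [
    ("Publisher A", 1941, "novel"),
    ("Publisher A", 1942, "children"),
    ("Publisher A", 1942, "children"),
    ("Publisher B", 1942, "essay"),
    ("Publisher B", 1943, "novel"),
    ("Publisher B", 1943, "essay"),
]


def genre_profile(rows):
    """Count titles per genre for each publisher."""
    profile = defaultdict(Counter)
    for publisher, _year, genre in rows:
        profile[publisher][genre] += 1
    return profile


def dominant_genre(profile, publisher):
    """Return the most frequent genre recorded for one publisher."""
    return profile[publisher].most_common(1)[0][0]


profile = genre_profile(rows)
print(dominant_genre(profile, "Publisher A"))  # children
print(dominant_genre(profile, "Publisher B"))  # essay
```

The same grouping could be applied to biographical fields (profession, nationality, and so on) to look for the networks mentioned above.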

Which software programs were used to build the database infrastructure and, as the case may be, to treat the data statistically?

The development of the publishers’ catalogues was spread out over three decades, from the 1980s through to the 2000s. After a few attempts, researchers opted for FileMaker software, which met their immediate needs and was simple to learn and use. Because their financial resources were limited, they had no choice but to manage without the help of a computer technician.

The catalogues were produced separately, the purpose from the start being to produce a monograph on each publishing house. Over time, certain researchers adapted the model by adding rubrics or automatic data-entry elements. In doing so, however, they compromised the uniformity of the catalogues and, consequently, ruled out the possibility of searching them simultaneously. Furthermore, the fact that several people entered data multiplied the chances of mistakes being made. Other difficulties related to the use of FileMaker arose as new versions of the software were released. The obligation to continually purchase new licences quickly became a serious constraint. The obsolescence of the early versions also became a source of concern, since the transfer of data from one version to the next always presented a risk of corruption or loss of data. However, the main pitfall remained the impossibility of making these databases available online. By the mid-2000s, the sharing of data had become a priority in research, and the creation of online platforms where databases could be stored had become a necessity.
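The uniformity problem can be illustrated with a small Python sketch: when each catalogue names its rubrics differently, a mapping to shared field names is needed before the catalogues can be searched together. The field names in `CANONICAL_FIELDS` and the `harmonize` helper are hypothetical; the actual rubric names used in the catalogues are not documented here.

```python
# Hypothetical mapping from locally used rubric names to shared field names.
CANONICAL_FIELDS = {
    "auteur": "author",
    "author": "author",
    "titre": "title",
    "title": "title",
    "tirage": "print_run",
    "print run": "print_run",
}


def harmonize(record):
    """Rename known rubrics to shared names; keep unknown ones under 'extra'."""
    clean, extra = {}, {}
    for key, value in record.items():
        canonical = CANONICAL_FIELDS.get(key.strip().lower())
        if canonical is None:
            extra[key] = value  # a rubric added locally by one researcher
        else:
            clean[canonical] = value
    if extra:
        clean["extra"] = extra
    return clean


# Invented record from a catalogue that used French rubric names.
record = {"Auteur": "Example Author", "Tirage": 500, "Note locale": "to verify"}
print(harmonize(record))
```

Setting unrecognized rubrics aside rather than discarding them preserves the local additions while still giving every record the same core fields.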

The decision was then made to abandon FileMaker and migrate to MySQL. Most of the documentary databases, that is, the indexes, bibliographies, and references, were thus converted and made available online. In the case of the publishers’ catalogues, a revision and standardization project was undertaken, but could not be completed due to a lack of resources. The most complete catalogues, as well as those considered to be the most pertinent for research, were nonetheless transferred, despite their gaps and any errors they might contain. A clear benefit is that it is now possible to search all catalogues simultaneously, and even to query all the databases in a single search in order to cross-reference the results.
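A simultaneous search of this kind can be sketched as follows. The example uses Python's built-in `sqlite3` module as a stand-in for MySQL so that it runs without a server; the table layout, the `search_author` helper, and the sample rows are assumptions for illustration and do not describe the actual GRÉLQ schema.

```python
import sqlite3

# In-memory SQLite database standing in for the MySQL server (assumption).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE entries (catalogue TEXT, author TEXT, title TEXT, year INTEGER)"
)

# Invented rows from two hypothetical publishers' catalogues.
conn.executemany(
    "INSERT INTO entries VALUES (?, ?, ?, ?)",
    [
        ("catalogue_a", "Author One", "First Title", 1941),
        ("catalogue_b", "Author One", "Second Title", 1943),
        ("catalogue_b", "Author Two", "Third Title", 1944),
    ],
)


def search_author(conn, name):
    """Return every entry by one author, across all catalogues, oldest first."""
    cur = conn.execute(
        "SELECT catalogue, title, year FROM entries WHERE author = ? ORDER BY year",
        (name,),
    )
    return cur.fetchall()


results = search_author(conn, "Author One")
for catalogue, title, year in results:
    print(catalogue, title, year)
```

Storing all entries in one table with a `catalogue` column is only one possible design; it makes a single query span every publisher, which is the benefit described above.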

Could you offer one or two examples of scientific (whether consensual or surprising) results obtained with the help of the database?

The publishers’ catalogues have enabled the creation of a number of monographs in the form of articles, dissertations, theses, and book chapters. The list of these documents shows how rich these catalogues have proven to be. They are also at the heart of the Histoire de l’édition littéraire au Québec au xxe siècle (Michon 1999, 2004, 2010), a brilliant synthesis that remains an indispensable reference in the field. But the catalogues have served other purposes. Data relating to the paratext has been used by, among others, Marie-Pier Luneau, who was interested in prefatory discourse (2016), and Sophie Drouin, whose thesis in progress focuses on illustrations. Other applications are envisaged, notably in geolocation.

Publishers’ catalogues are still relevant today because, to date, no other bibliographical tool records the production of publishers who were active prior to the 1960s. Nevertheless, their development depends on the financial resources allotted to research and, in this sense, their long-term survival is by no means guaranteed. But their development also depends on the willingness of researchers to explore all the possibilities that they offer, and from this perspective the future remains promising.